Big Data Sets are available since BellaDati 2.9.

Big Data Sets are a special type of data set that can be used to store very large amounts of data and build pre-calculated cubes. The main differences between standard data sets and big data sets are:

The main advantage of Big Data Sets is the ability to create pre-calculated cubes, which significantly speeds up report loading times.

Please note that Big Data Set functionality needs to be enabled in the license and in the domain.

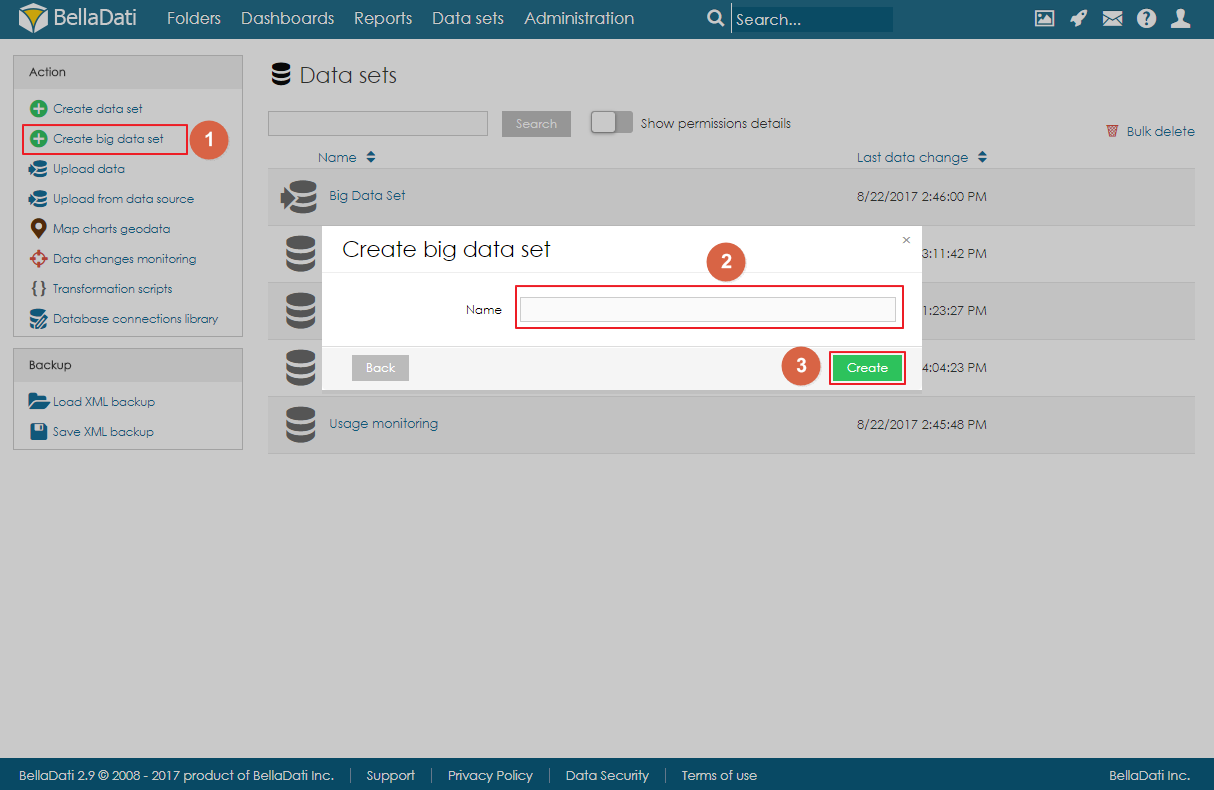

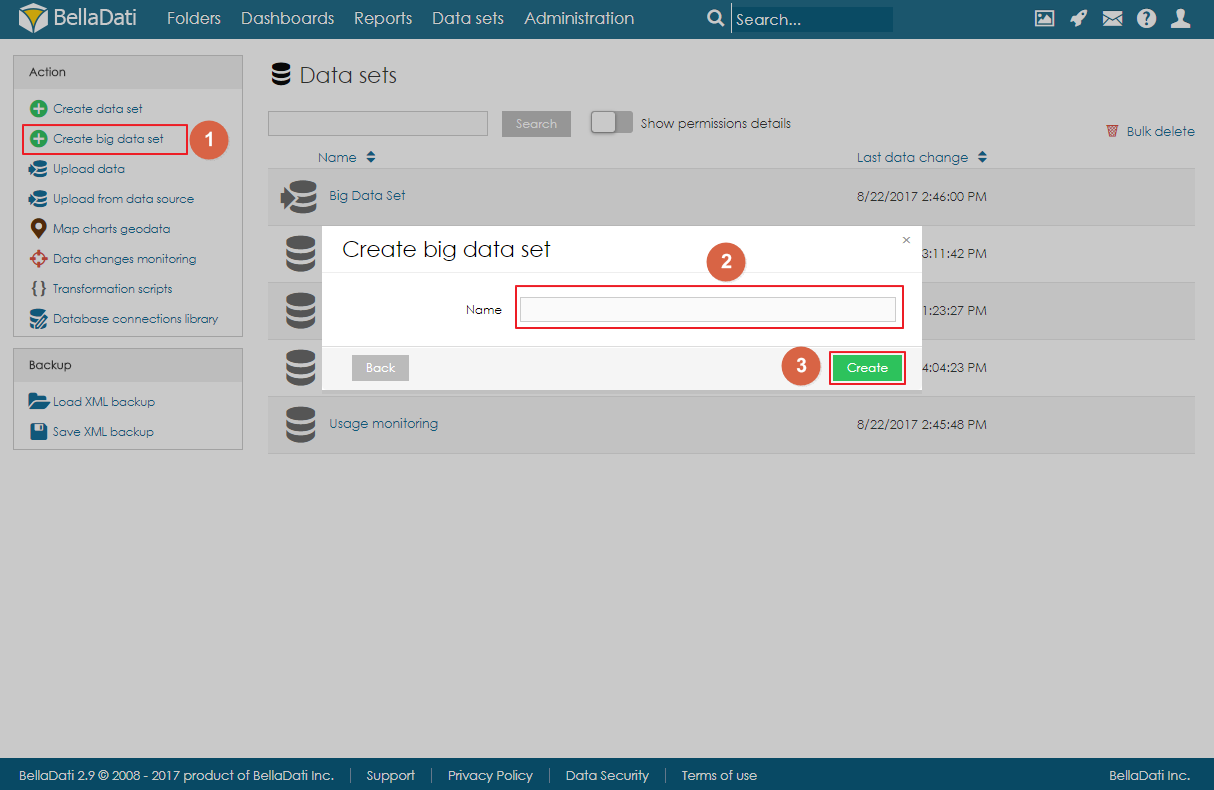

A Big Data Set can be created by clicking the Create big data set link in the Action menu on the Data Sets page, filling in the name of the big data set, and clicking Create.

The landing page (summary page) is very similar to the standard data set summary page. It has a left navigation menu and a main area with basic information about the data set:

Data can be imported to a big data set the same way as to a standard data set. Users can import data either from a file or from a data source. However, a big data set does not use standard indicators and attributes; instead, each column is defined as an object. These objects can have various data types:

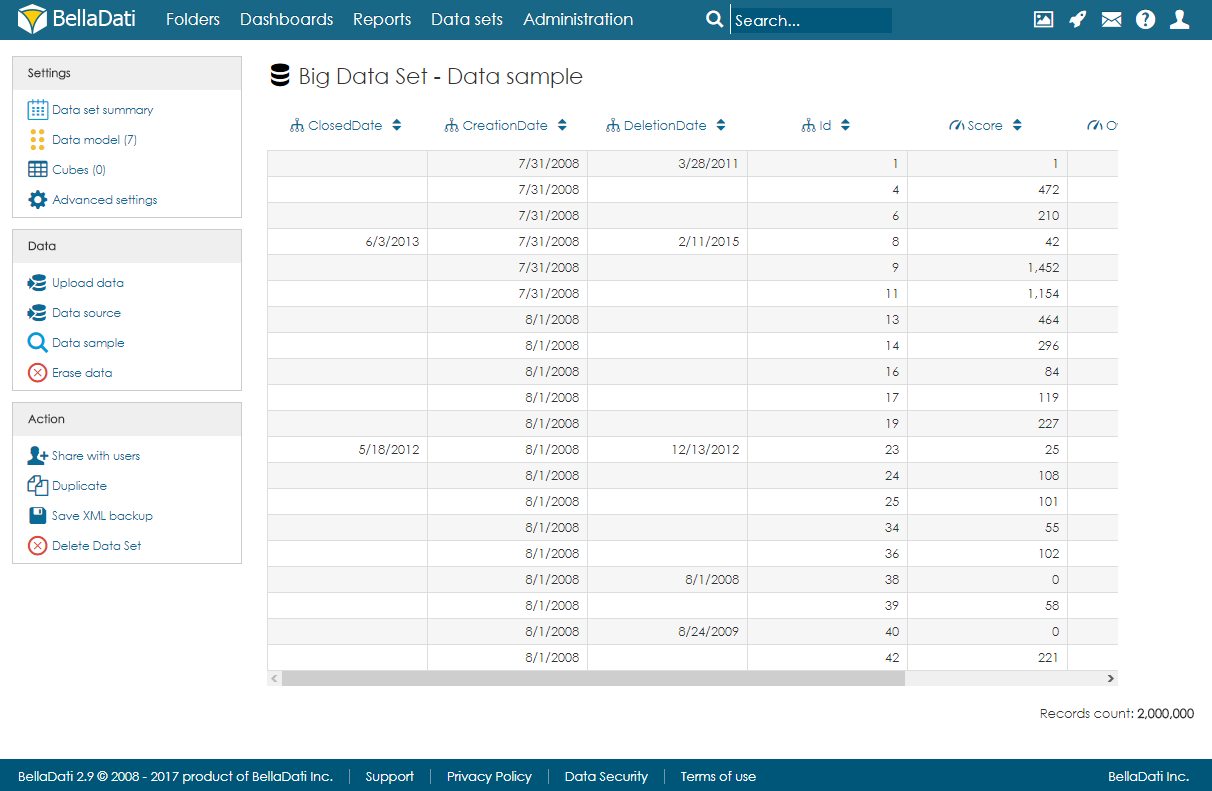

After import, users can open the data sample page to see a randomly selected part of the data.

Objects (columns) can be created automatically during the import, or they can be defined on the data model page. When adding a new object, users can specify its name, data type, indexation, and whether it can contain empty values. Please note that GEO point, GEO JSON, long text, boolean and numeric objects cannot be indexed.
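The indexation rule above can be illustrated with a small Python sketch. This is not BellaDati code: the class `ObjectDefinition`, the type identifiers, and the `text` type used in the usage example are hypothetical names chosen for illustration only.

```python
from dataclasses import dataclass

# Types the text lists as non-indexable; the identifier spellings are assumptions.
NON_INDEXABLE_TYPES = {"geo_point", "geo_json", "long_text", "boolean", "numeric"}


@dataclass
class ObjectDefinition:
    """Hypothetical model of one object (column) in a big data set."""
    name: str
    data_type: str
    indexed: bool = False
    allow_empty: bool = True

    def __post_init__(self):
        # Reject an index on a type that the documentation says cannot be indexed.
        if self.indexed and self.data_type in NON_INDEXABLE_TYPES:
            raise ValueError(f"{self.data_type} objects cannot be indexed")
```

For example, `ObjectDefinition("city", "text", indexed=True)` is accepted in this sketch, while `ObjectDefinition("amount", "numeric", indexed=True)` raises a `ValueError`.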

Objects can also be edited and deleted by clicking on their row.

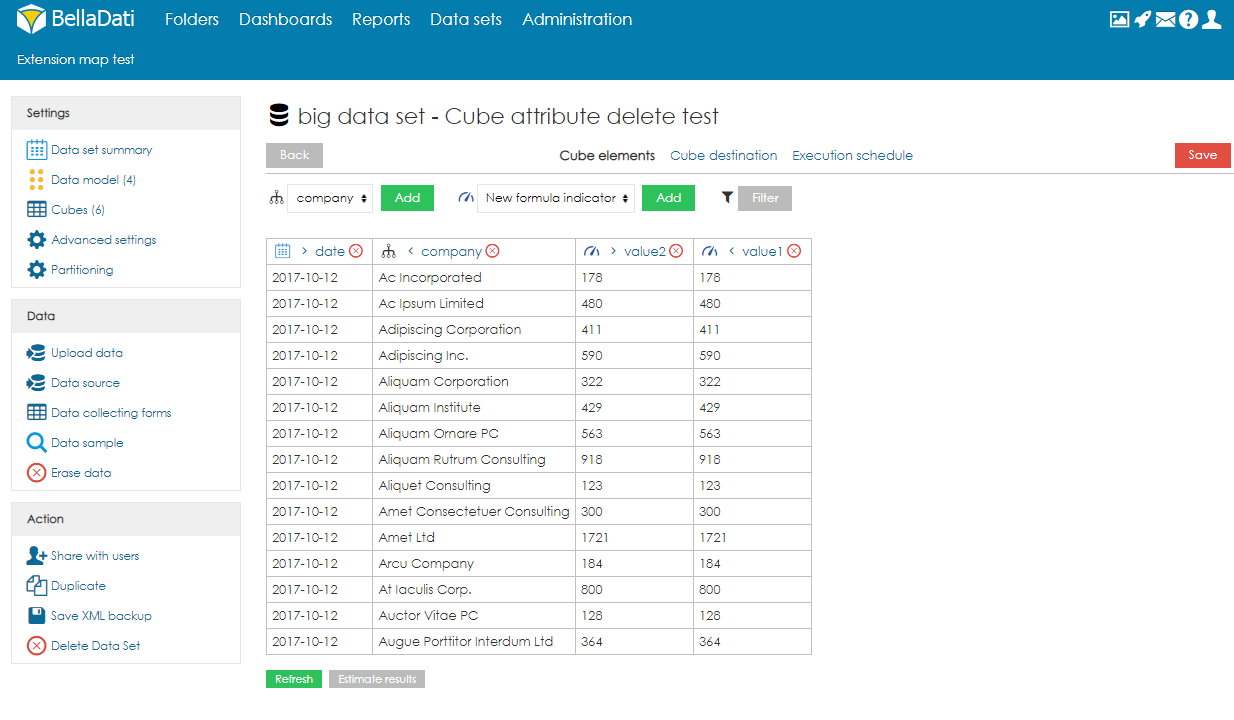

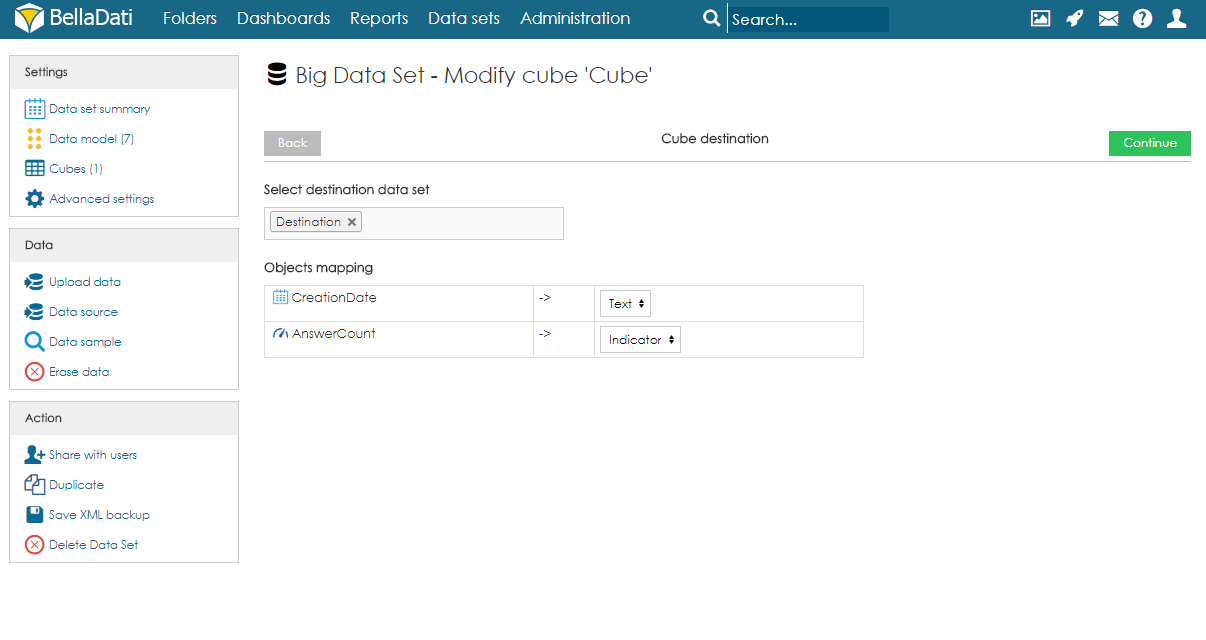

A cube is a data table which contains aggregated data from the big data set. Users can define the aggregation and also limit the data by applying filters. Data from the cube can then be imported to a data set. Each big data set can have more than one cube, and each cube can have different settings.
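Conceptually, a cube is the result of filtering the raw rows and then aggregating them per group. The following Python sketch illustrates that idea only; it is not how BellaDati implements cubes, and the function `build_cube`, the column names, and the choice of sum as the aggregation are all assumptions made for the example.

```python
from collections import defaultdict


def build_cube(rows, group_by, measure, row_filter=None):
    """Sum `measure` for each distinct combination of the `group_by` columns."""
    totals = defaultdict(float)
    for row in rows:
        # Skip rows excluded by the optional filter.
        if row_filter is not None and not row_filter(row):
            continue
        key = tuple(row[col] for col in group_by)
        totals[key] += row[measure]
    # One output row per group, in order of first appearance.
    return [dict(zip(group_by, key), **{measure: total})
            for key, total in totals.items()]


# Hypothetical raw data standing in for a big data set.
rows = [
    {"country": "CZ", "year": 2023, "revenue": 10.0},
    {"country": "CZ", "year": 2023, "revenue": 5.0},
    {"country": "SK", "year": 2023, "revenue": 7.0},
    {"country": "SK", "year": 2022, "revenue": 3.0},
]
cube = build_cube(rows, ["country"], "revenue",
                  row_filter=lambda r: r["year"] == 2023)
```

Here four raw rows collapse into two aggregated rows, one per country, which is the smaller table a report would then read.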

To create a cube, users need to follow these steps:

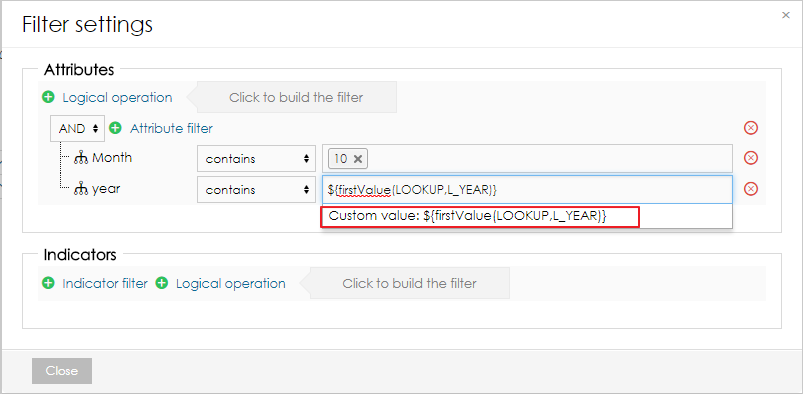

In the filters, users can reference the first and last values from a different data set by using the following functions:

${firstValue(DATA_SET_CODE,L_ATTRIBUTE)}

${lastValue(DATA_SET_CODE,L_ATTRIBUTE)}

${firstValue(DATA_SET_CODE,M_INDICATOR)}

${lastValue(DATA_SET_CODE,M_INDICATOR)}

The function has to be added as a custom value to the filter.

It is also possible to add a filter formula, which allows users to create more complex filter algorithms. The function getLastSuccessfulCubeExecution() can be used to get the date and time of the last successful cube execution.

def f = createFilter()

andFilter(f, 'M_TIMESTAMP_INDICATOR', 'GT', timestamp(datetime(getLastSuccessfulCubeExecution().toString('yyyy-MM-dd HH:mm:ss'))))

return f
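The effect of the formula above is an incremental load: only rows with a timestamp greater than the last successful execution pass the filter. A minimal Python sketch of the same comparison follows, purely as an illustration; `rows_since` and the sample data are hypothetical and unrelated to the BellaDati API.

```python
from datetime import datetime


def rows_since(rows, timestamp_column, last_successful_execution):
    """Keep rows whose timestamp is strictly greater (GT) than the last successful run."""
    return [r for r in rows if r[timestamp_column] > last_successful_execution]


# Hypothetical sample data and last execution time.
last_run = datetime(2024, 1, 10, 12, 0, 0)
rows = [
    {"id": 1, "ts": datetime(2024, 1, 9, 8, 0, 0)},
    {"id": 2, "ts": datetime(2024, 1, 10, 12, 0, 0)},
    {"id": 3, "ts": datetime(2024, 1, 11, 9, 30, 0)},
]
new_rows = rows_since(rows, "ts", last_run)
```

Because the comparison is strict, a row stamped exactly at the last execution time (id 2 here) is not picked up again.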

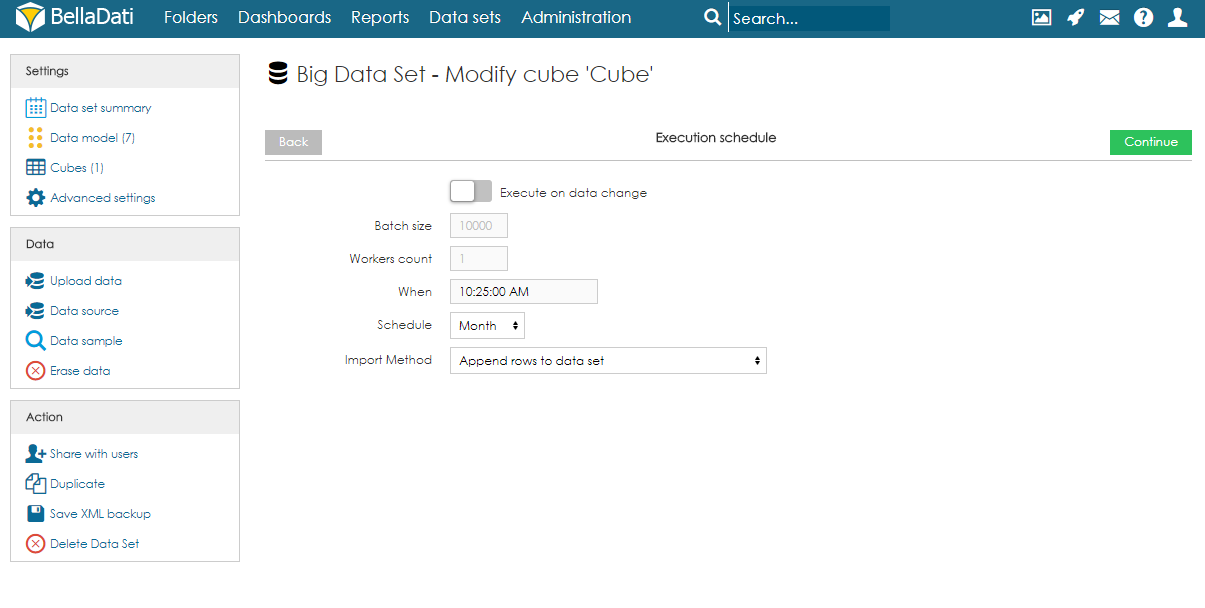

Batch size (default 1000) - the number of rows processed in one batch. In special cases, it might be beneficial to increase or decrease the value. However, in most cases, we strongly recommend leaving it at the default.

Workers count (default 8) - the number of workers which should be used for parallel execution.

When - time of the first execution.

Schedule - how often the execution should be repeated.

Import Method - what should happen with data in the destination data set. See Data overwriting policy for more information.
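To show how the Batch size and Workers count settings interact, here is a generic Python sketch of batched parallel processing. It only illustrates the general technique; the functions `split_into_batches` and `process_in_parallel` are hypothetical, and the use of a thread pool is an assumption, not a description of BellaDati internals.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_batches(rows, batch_size=1000):
    """Cut the row list into consecutive chunks of at most `batch_size` rows."""
    return [rows[i:i + batch_size] for i in range(0, len(rows), batch_size)]


def process_in_parallel(rows, handle_batch, batch_size=1000, workers=8):
    """Run `handle_batch` on every batch using up to `workers` threads."""
    batches = split_into_batches(rows, batch_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the input batches.
        return list(pool.map(handle_batch, batches))


# 2500 hypothetical rows yield batches of 1000, 1000 and 500 rows.
rows = list(range(2500))
batch_sizes = [len(b) for b in split_into_batches(rows)]
partial_sums = process_in_parallel(rows, sum)
```

A larger batch size means fewer, heavier units of work; more workers means more batches in flight at once. This trade-off is why the defaults are usually best left unchanged.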

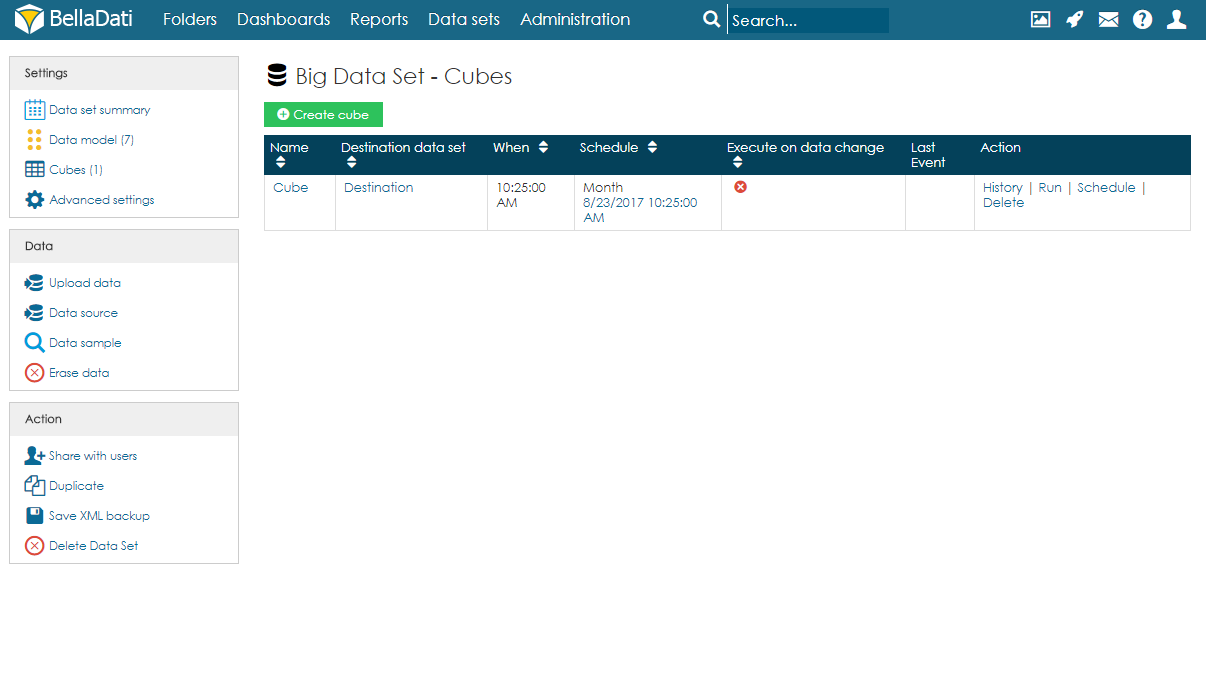

On the Cubes page, users can see a table with all cubes associated with the big data set. For each cube, information about the schedule and the last event is available. Users can also edit the cube by clicking anywhere on the row or on the name of the cube. Several actions are also available for each cube:

As mentioned above, execution can be run manually, on data change or by schedule.

Users can also cancel the next scheduled execution by clicking on the date in the Schedule column and confirming the cancellation. Please note that this only cancels the execution; it does not delete the schedule. After running the execution manually, the schedule will be restored. To delete the scheduled execution completely, users have to edit the cube and delete the Execution schedule.

When using the XML backup of a big data set, the target data set and the mapping in the cube are not stored. After restoring, they have to be set up again.